A gallery of great posters from great Hilfskräfte (student assistants), past and present:

https://www.khm.de/~sievers/sag_posters/index.html

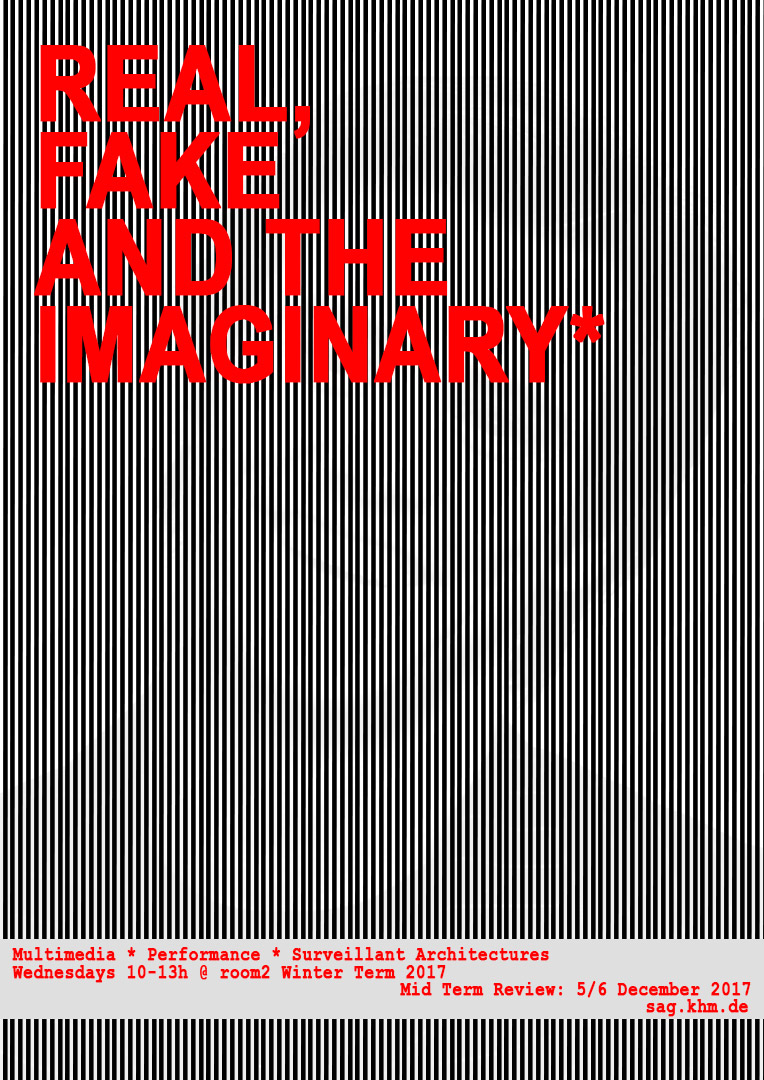

Following on from „The Body and the Network“, we explore historical and contemporary discourses and productions of „real“ and „fake“, as well as their development into various cultural movements and commodities. Starting from personal observation, we focus on the slide from real to fake, and back. What new relationships are there to be had with the fake?

Tue May 9, 16.50 – 20.40 h / Wed May 10, 09.00 – 11.40 h, 2017

@ Hotel ibis Koeln Centrum, Neue Weyerstraße, Köln

30-minute slots, presenting work-in-progress in small hotel rooms

sign up with us if you’d like to present something, or just turn up on time to join the review

Two days of intense command-line hackery. I really liked the moment when everybody realized at the same time that you can commandeer other people’s computers with ssh, and started changing passwords and hostnames or rebooting each other’s machines.

workshop worksheets are here: https://www.khm.de/~sievers/net/index.html

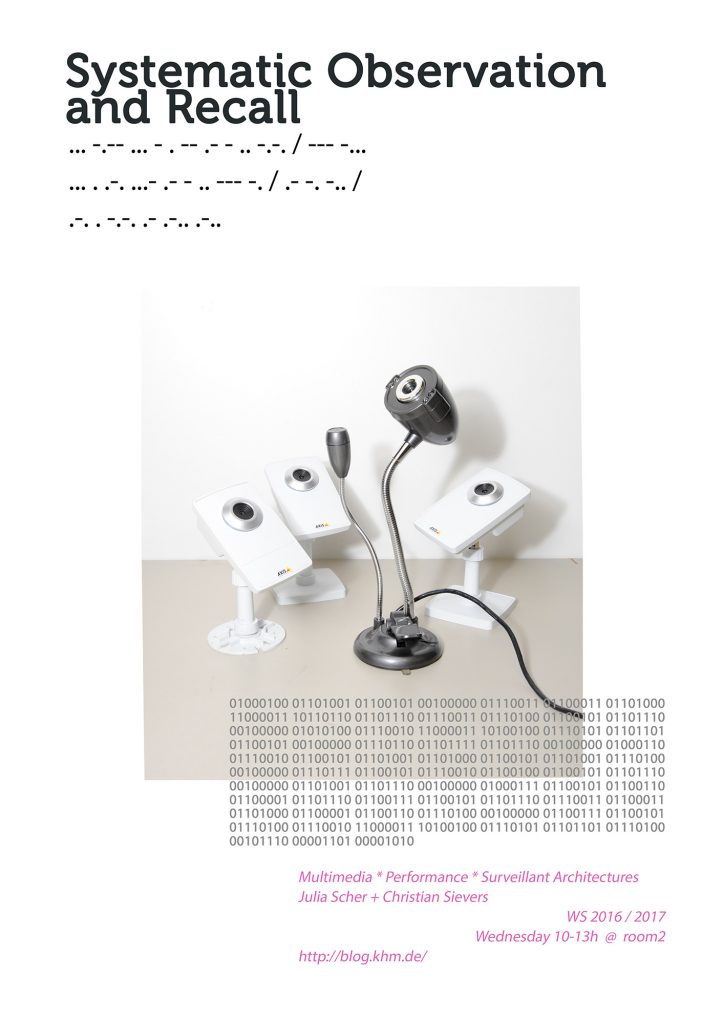

The Body and the Network

A discussion and production seminar geared toward critically engaging with contemporary surveillance and control measures in artistic practice. The focus is on the development of new methodologies, identities, and narratives, especially those that cross diverse media in a performative way.

Building on the winter term’s „Systematic Observation and Recall“, the seminar will look at body extensions in societies of control and how they manifest in networked media. This includes artworks, network activity, performances or videos that reconfigure, refocus and recontextualize current technologies of connection and display.

We will investigate new transient cultures of extending and marking the body for security, surveillance, pleasure and storytelling, as well as contemporary platforms for live display, including the theatre, the databank and the Max patch.

Workshops:

Raspberry Pi Basics

A practical workshop with help from Martin Nawrath to configure and install network devices with the Raspberry Pi as a basis. Aimed at all experience levels, the workshop will introduce the basics of networking technology, the command line, scripting and automation, as well as working with external sensors.

Media for Live Events

Building on this, later in the term there will be an opportunity to work with Prof. Larry Shea from the School of Drama, Carnegie Mellon University. In this workshop, lasting several weeks, we’ll use the new skills to imagine and build new networked and sensor-based set-ups for installations, performances and open scenarios, working towards the Rundgang.

Mid-Term Review: 9 & 10 May 2017

Excursion to opening days of documenta 14

Required reading

– Nervous Systems, HKW Berlin, 2016, introduction by Anselm Franke, Stephanie Hankey and Marek Tuszynski (online)

– Out of Body, Skulptur Projekte Münster, 2016: The Body of the Web (online)

– Surveillance, Performance, Self-Surveillance. Interview with Jill Magid by Geert Lovink (online)

– Flesh and Stone, The Body and the City in Western Civilization, Richard Sennett, New York, 1994

Invited speakers

Geert Lovink: Medienkatastrophe, 20.4., 18 h, Aula

Simon Denny: Medienkatastrophe, 26.4., 19 h, Aula

Mark Bain

Prof. Larry Shea (June-July)

Michel Foucault, Discipline and Punish, especially the “Docile Bodies” chapter.

English translation freely available online: https://encrypted.google.com/search?hl=de&q=Michel Foucault Discipline and Punish filetype:pdf

(The German translation: Überwachen und Strafen is in the Semesterapparat, and there is a second copy in the library that you can take home: PHI I.1.2 – 28)

Gilles Deleuze – Postscript on the Societies of Control

Very short essay from 1990, available online. German translation in the Semesterapparat: PHI C.7 (DEL) – 76

Here’s a 20-minute video explaining the core concepts: https://www.youtube.com/embed/GIus7lm_ZK0 (try to ignore the background music; otherwise it’s pretty good)

Giorgio Agamben: What is an Apparatus?

PDF available online: https://duckduckgo.com/?q=Giorgio+Agamben+-+What+is+an+Apparatus

German translation: Was ist ein Dispositiv? in the Semesterapparat: PHI I.1.2 – 58

“According to the philosopher Giorgio Agamben, ‘subjectivity’ is the result of an encounter between ‘living beings’ and the ‘apparatus’ – which he defines, following Michel Foucault, as technologies that possess the power ‘to capture, orient, determine, intercept, model, control, or secure the gestures, behaviours, opinions, or discourses of living beings.’ Art, according to the approach of Nervous Systems, possesses in turn the power to release life from these apparatuses of capture – even if only for moments and in the imagination – thus undoing the current drift toward ever-greater systemic closure. It is in this realm that we can begin to assemble the fragments of lived experience historically, in order to observe the transformations of ‘the social’ in the present, and the frontiers of its subsumption.”

(from the introduction to the Nervous Systems catalogue, see below)

Nervous Systems exhibition 2016 @ HKW, Introductory essay in the catalogue

by Anselm Franke, Stephanie Hankey and Marek Tuszynski

The essay is freely available online from HKW

The entire catalogue is in the Semesterapparat: KUN B.6.14 – 813

poster by Nikolai

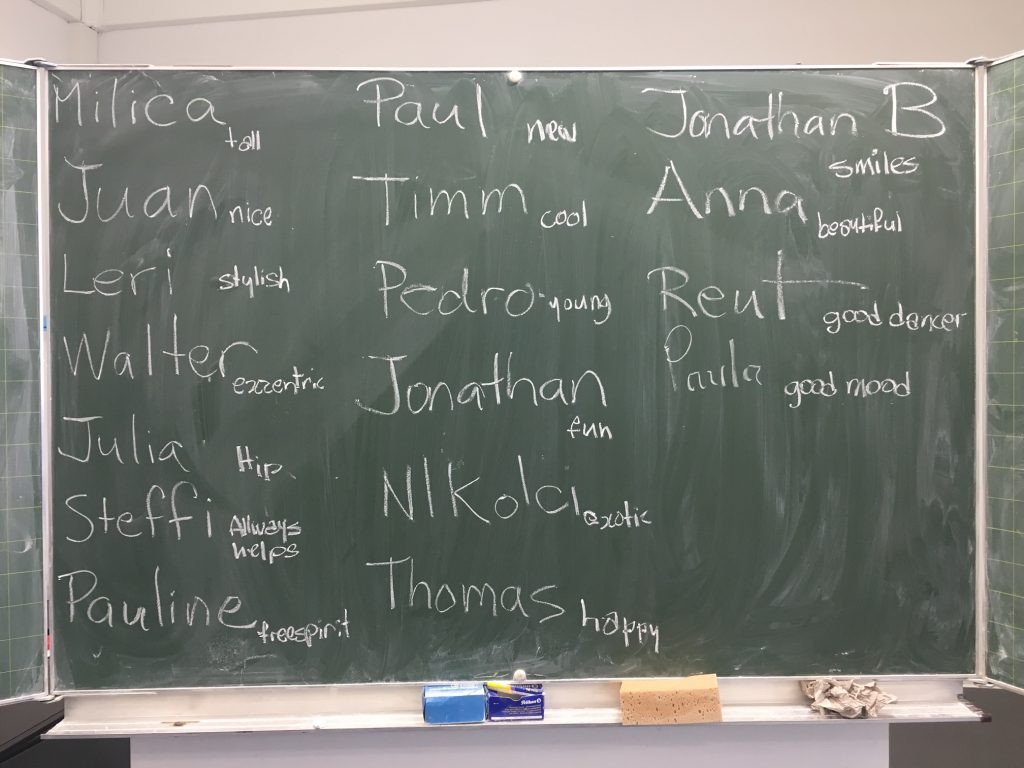

from using technology to find intimacy with one another, to intimacy with technology – SAG 27 Apr 2016

Good introduction to AI concepts: Plug and Pray – Von Computern und anderen Menschen, documentary by Jens Schanze, 2009

https://de.wikipedia.org/wiki/Plug_%26_Pray

“My main objection was that when the program says ‘I understand’, then that’s a lie. There’s no one there. … I can’t imagine that you can do an effective therapy for someone who is emotionally troubled by systematically lying to him.” Joseph Weizenbaum, Plug and Pray documentary, from ~30’30

“At the beginning of all this computer stuff, in the first 15 years or so, it was very clear to us that you can give the computer a job only if you understand that job very well and deeply. Otherwise you can’t ask it to do it. Now that has changed. If we don’t understand a task, then we give it to the computer, who then is being asked to solve it with artificial intelligence. … But there’s a real danger. We’ve seen that in many, in almost all areas where the computer has been introduced, it’s irreversible. At banks, for example. And if we start to rely on artificial intelligence now, and it’s not reversible, and then we discover that this program does something we first don’t understand and then don’t like. Where does that leave us?” Plug and Pray documentary, 25’36 – 26’50 (an interview from 1987)

At the other end of the spectrum:

“We already have many examples of what I call narrow artificial intelligence … – and within 20 years, around 2029, computers will really match the full range of human intelligence. So we’ll be more machine-like than biological. So when I say that people say, I don’t want to become a machine. But they’re thinking about today’s machines, like this. Now we don’t want to become machines like this. I’m talking about a different kind of machine. A machine that’s actually just as subtle, just as complex, just as endearing, just as emotional as human beings. Or even more so. Even more exemplary of our moral, emotional and spiritual intelligence. We’re going to merge with this technology. We’re going to become hybrids of biological and non-biological intelligence, and ultimately be millions of times smarter than we are today. And that’s our destiny.” Ray Kurzweil (from minute 6.30)

→ says we are going to MERGE with this technology. A longing to be expanded, connected, rescued from the state of being cut off.

Kraftwerk, 1970s: We are the Robots https://www.youtube.com/watch?v=VXa9tXcMhXQ – a longing to be one with the machine, machine-like (very different from James Brown’s idea, though). Is this chiefly a male desire: to be free of the mess of emotional confusion and ambiguity?

→ also see Günther Anders, Die Antiquiertheit des Menschen, Band I, C. H. Beck, München 1956. “Promethean Shame”, man’s feeling of inadequacy in view of his creations.

Turing Test: https://en.wikipedia.org/wiki/Turing_test

A Turing Test we’re all familiar with: CAPTCHA (“Completely Automated Public Turing test to tell Computers and Humans Apart”) https://en.wikipedia.org/wiki/CAPTCHA

reCAPTCHA (human users exploited for machine learning) https://www.google.com/recaptcha/intro/index.html#ease-of-use

Contemporary example: Try anything hosted on Cloudflare via Tor, and you’ll have to prove you’re not a bot: https://support.cloudflare.com/hc/en-us/articles/203306930-Does-CloudFlare-block-Tor-

ELIZA: designed as an artificial psychiatrist. So from the start, the machine aims to make deep contact, to get right into people’s minds. Also described as a “parody” of a therapist.

Emulation on a web server: http://nlp-addiction.com/eliza
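To make the mechanism concrete, here is a minimal ELIZA-style responder in Python. It is not Weizenbaum’s original program or its DOCTOR script; the rules, the pronoun table and the name `respond` are made up for illustration. Like ELIZA, it looks for keywords and mirrors the user’s own words back by swapping pronouns, which is enough to create the impression of being listened to.

```python
import re

# Pronoun swaps so the bot can mirror the user's words back ("my" -> "your").
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my", "are": "am",
}

# Hypothetical keyword rules in the spirit of ELIZA's DOCTOR script:
# (regex matched against the whole sentence, response template).
RULES = [
    (r"i feel (.*)", "Why do you feel {0}?"),
    (r"i am (.*)", "How long have you been {0}?"),
    (r"my (.*)", "Tell me more about your {0}."),
    (r".*\b(mother|father)\b.*", "Tell me more about your family."),
]

DEFAULT = "Please go on."  # neutral prompt when no keyword matches


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(sentence: str) -> str:
    """Return the first matching rule's response, or the neutral prompt."""
    text = sentence.lower().strip().rstrip(".!?")
    for pattern, template in RULES:
        match = re.fullmatch(pattern, text)
        if match:
            # Extra arguments are ignored by str.format if the template has no slot.
            return template.format(*(reflect(group) for group in match.groups()))
    return DEFAULT


print(respond("I feel you hate me"))  # Why do you feel I hate you?
```

The program has no idea what is being said; it only rearranges the user’s own sentence, and the user supplies the meaning.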

Anecdote recounted by Charlie Brooker, creator of Black Mirror (watched last time): “Joseph Weizenbaum, the inventor of one of the first chat bots, Eliza, had his secretary test her out. Shortly after, she asked him to leave, because even though she knew it was a machine she was talking to, she was having a very intimate conversation and wanted to be alone.”

You: Are you a human?

Julia: I am just as real as you are.

You: Why do you say that you are real?

Julia: Unfortunately, I can’t give you any further reason. But you can ask me a new question!

You: Are you a human

Julia: I answer, therefore I am! Real, virtual, genuine – does that still make a difference in 2016!?

Tries to sell you access to porn if you mention sex.

An artificial artificial intelligence faces the jury. Will the imitation succeed, or will the bluff be exposed? A game on the edge of the uncanny valley that demands empathy for human and inhuman communication on both sides.

https://media.ccc.de/v/1c2-6610-reverse_turing_test

I’m being tested at 57min.

→ The training goes both ways: Computers condition us to become more like them.

‘In Turing’s dream scenario, chatbots will actually push us to be better conversationalists and clearer thinkers. As Will put it, reflecting on the chatbot experiment, “having Pinocchio-like robots that can think, feel and discriminate morally will broaden our concept of humanity, challenging us organic humans to be better, more sensitive, imaginative creatures.” Amen to that.’

→ DIALECTOR is so far the only bot that on its own decides to end a conversation.

The interesting and worrying part of the entire test was that it became a plausible, creative racist asshole. A lot of the worst things that Tay is quoted as saying were the result of users abusing the “repeat” function, but not all. It came out with racist statements entirely off its own bat. It even made things that look disturbingly like jokes. Antipope.org

- “tech developments in other areas are about to turn the whole ‘sex with your PC’ deal from ‘crude and somewhat rubbish’ to ‘looks like the AIs just took a really unexpected job away’.”

http://www.antipope.org/charlie/blog-static/2016/04/markov-chain-dirty-to-me.html

There is no reason why bots won’t get linked to something like a masturbating machine. Tip: don’t google “AUTOMATED TELEDONICS”.

“For a lot of people I suspect the non-human nature of the other party would be a feature, not a bug – much like the non-human nature of a vibrator.”

The update explains what really happened: non-native speakers can play predefined voice snippets to have a ‘conversation’. It doesn’t say much about the much-anticipated technological progress in AI, but it says a lot about new ways that people relate to each other.

“Before this goes any further, I think we should get tested. You know, together.” “Don’t you trust me?” – “I just want to be sure.”

Bottom line: aggressive, disruptive bots will be unavoidable, and might make much of the Internet as we know it very toxic. What does Tay mean for the future of politics and social media?

– who is responsible if a bot breaks the law? Will robots in future be punishable for their actions? See Asimov’s Laws of Robotics. Also re “Samantha West”:

But the peculiar thing about Samantha West isn’t just that she is automated. It’s that she’s so smartly automated that she’s trained to respond to queries about whether or not she is a robot by telling you she’s a human. I asked [industry expert Chris] Haerich if there is a regulation against robots lying to you.

“I don’t…know…that…,” she said. “That’s one I’ve never been asked before. I’ve never been asked that question. Ever.”

→ Ashley Madison hack: it turned out that, for the millions of men who signed up there, there were 70,000 fake profiles run by female bots, called “engagers”. This was part of a large fraud operation tricking male subscribers into paying to see messages and get in „touch“.

http://gizmodo.com/almost-none-of-the-women-in-the-ashley-madison-database-1725558944

http://gizmodo.com/how-ashley-madison-hid-its-fembot-con-from-users-and-in-1728410265

is anyone home lol http://www.kunsthauslangenthal.ch/index.php/bitnik-huret.en/language/de.htm

In their new project for Kunsthaus Langenthal, !Mediengruppe Bitnik uses Bots, that is, programmes executing automatic tasks, thereby often simulating human activity. In their project, tens of thousands of Bots, hacked from a dating platform, where they feign female users, will emerge as a liberated mass of artificial chat partners.

https://www.youtube.com/watch?v=mAf6zKRb1wI

http://www.ubu.com/film/acconci_theme.html

“Look we’re both grown up, we don’t have to kid each other. I just need a body next to mine. It’s so easy here, so easy. Just fall right into me. Come close to me, just fall right into me. It’s so easy. No trouble. No problems. Nobody doesn’t even have to know about it. Come on, we both need it, right? Come on.” (Stops tape.) “Oh I know you need it as much as I do. Dadadadadada that’s all you need. Come on.”

Dadadadadada that’s all you need. Come on.

Dadadadadada that’s all you need. Come on.

I know I need it, you know you need it. … we don’t have to kid ourselves. We don’t have to say this is gonna last. All that counts is now, right? My body is here. Your body can be here. That’s all we want. Right?

for those that missed today’s seminar, here are my notes and some links

OkCupid, as an example of a corporation that needs to get to know you intimately. They ask questions to get a (good enough) image of who you are, so they can recommend the right match.
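How a questionnaire like this can be turned into a “match percentage” can be sketched in Python. This is a minimal sketch modelled loosely on how OkCupid has publicly described its method (answers weighted by how important each question is to you, with both people’s satisfaction combined as a geometric mean); the weights, the data layout and the function names are assumptions for illustration, not OkCupid’s actual code.

```python
from math import sqrt

# Assumed importance weights (loosely following values OkCupid has described in public).
IMPORTANCE = {"irrelevant": 0, "a little": 1, "somewhat": 10, "very": 50, "mandatory": 250}


def satisfaction(prefs: dict, answers: dict) -> float:
    """Fraction of one person's importance points earned by the other person's answers.

    prefs maps question -> (set of acceptable answers, importance label);
    answers maps question -> the other person's answer.
    """
    earned = possible = 0
    for question, (acceptable, importance) in prefs.items():
        if question not in answers:
            continue  # only questions the other person answered are counted
        weight = IMPORTANCE[importance]
        possible += weight
        if answers[question] in acceptable:
            earned += weight
    return earned / possible if possible else 0.0


def match_percentage(a_prefs: dict, a_answers: dict, b_prefs: dict, b_answers: dict) -> float:
    """Geometric mean of how satisfied each person is with the other, as a percentage."""
    a_satisfied = satisfaction(a_prefs, b_answers)
    b_satisfied = satisfaction(b_prefs, a_answers)
    return 100 * sqrt(a_satisfied * b_satisfied)


# Hypothetical two-question profiles (the data doubles of A and B).
a_answers = {"tidy": "yes", "smoke": "no"}
a_prefs = {"tidy": ({"yes"}, "very"), "smoke": ({"no"}, "somewhat")}
b_answers = {"tidy": "yes", "smoke": "yes"}
b_prefs = {"tidy": ({"yes", "no"}, "a little"), "smoke": ({"no"}, "very")}

print(round(match_percentage(a_prefs, a_answers, b_prefs, b_answers), 1))  # 91.3
```

Here A earns 50 of 60 possible points because B smokes, while B is fully satisfied, so the score is 100 × √(50/60), about 91.3 %. Whatever the exact weights, the “match” is computed entirely from the two data doubles.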

Try it, just sign up: https://www.okcupid.com (but use a temporary email address, and I’d suggest not doing it while logged in to your everyday browser. I did it via Tor and using Spamgourmet: https://www.spamgourmet.com/index.pl?languageCode=EN )

Here’s another way to flesh out your digital double: https://www.okcupid.com/tests/the-are-you-really-an-artist-test

(by the way, here are my results:

So does that mean that first you’d have the Data Doubles falling in love, then the real life yous just have to follow suit?

Some notes on dating algorithms and methodology by Christian Rudder, statistician

http://blog.okcupid.com/index.php/we-experiment-on-human-beings/

“The ultimate question at OkCupid is, does this thing even work? By all our internal measures, the “match percentage” we calculate for users is very good at predicting relationships. It correlates with message success, conversation length, whether people actually exchange contact information, and so on. But in the back of our minds, there’s always been the possibility: maybe it works just because we tell people it does. …”

The result:

“When we tell people they are a good match, they act as if they are. Even when they should be wrong for each other.”

Some more about falling in love: The Experimental Generation of Interpersonal Closeness

A psychological study: You only need to answer 36 questions to establish intimacy and trust. “Love didn’t happen to us. We’re in love because we each made the choice to be.”

Article: http://www.nytimes.com/2015/01/11/fashion/modern-love-to-fall-in-love-with-anyone-do-this.html

Paper: http://psp.sagepub.com/content/23/4/363.full.pdf+html

“I first read about the study when I was in the midst of a breakup. Each time I thought of leaving, my heart overruled my brain. I felt stuck. So, like a good academic, I turned to science, hoping there was a way to love smarter”

Read: a way to make love safer and more convenient (the drive behind all of this IMHO).

…

https://www.youtube.com/watch?v=ld9m8Xrpko0

The whole episode is online on YouTube if you want to re-watch it. The DVD box set is in our Semesterapparat in the library.

It talks about, among other things, love, death and bereavement. It’s also fairly didactic in the way it explains the limits of Facebook’s way of constructing your Data Double (e.g. when real-life Ash shares an image of himself because it’s “funny”, and Ash the fleshbot then takes it at face value).

Charlie Brooker, creator of the series, explains in a panel discussion the ideas that led to writing this story:

“I was spending a lot of time late at night looking at Twitter, and I was wondering, what if all these people were dead. Would I notice?”

Basically, people’s reactions and posts are so formulaic that they’re entirely predictable. So we might as well get a robot to do it.

“Also there is the story of the inventor of one of the first chat bots, Eliza. He had his secretary test her out, and shortly after she asked him to leave, because even though she knew it was a machine she was talking to, she was having a very intimate conversation and wanted to be alone.”

…

So it seems there is a huge market for intimacy with robots out there. Presumably it’s going to become a lot more visible soon. David Levy is a computer scientist who has done decades of research. In his book “Love and Sex with Robots” he recommends sex robots as the solution to many of our problems.

For the press response, see e.g. https://www.theguardian.com/technology/2015/dec/13/sex-love-and-robots-the-end-of-intimacy

…

The most prominent and profound critic of this development is Sherry Turkle, Professor of Social Studies of Science and Technology at MIT. In her work she focuses on human-technology interaction, and used to praise the Internet for the freedom it gave people in re-inventing themselves, trying out different personas. In recent years she has become increasingly critical of the way that technology limits the depth of human communication and interaction.

See her book “Alone Together” (in the library):

Facebook. Twitter. SecondLife. “Smart” phones. Robotic pets. Robotic lovers. Thirty years ago we asked what we would use computers for. Now the question is what don’t we use them for. Now, through technology, we create, navigate, and perform our emotional lives.

We shape our buildings, Winston Churchill argued, then they shape us. The same is true of our digital technologies. Technology has become the architect of our intimacies.

She starts the book with research about the emotional investment people make in toy robots, and describes how her research has shown again and again that people are more than happy to confide in robots and enter into intimate relationships with them.

People often find that robots are actually preferable to a live person. Unlike real pets, robot puppies stay puppies forever. Your sex robot will always be young, willing, and only be there for you (and won’t think you have strange desires or are a bad performer). According to Turkle, the problem is that this is a reduction of the bandwidth of human experience as we used to know it. She quotes from her research with teenagers: “texting is always better than talking”, as it’s less risky. Risk-avoidance is at the heart of the desire for intimacy with robots. (Again, security and convenience.)

Interaction with robots is sold as “risk free”, whereas “Dependence on a person is risky – it makes us subject to rejection – but it also opens us up to deeply knowing each other.”

“The shock troops of the robotic moment, dressed in lingerie, may be closer than most of us have ever imagined. … this is not because the robots are ready but because we are.”

It’s in the library! From the introduction to the book: http://alonetogetherbook.com/?p=4

A quick TED talk about the ideas and research behind the book: TEDxUIUC – Sherry Turkle – Alone Together: https://www.youtube.com/watch?v=MtLVCpZIiNs

…

A talk by Gemma Galdon-Clavell https://www.youtube.com/watch?v=0eifMYCfuBI. My rough notes:

“who do you think you are? who do you think the person next to you is? …

identities are a complex thing. we mess with our identities, we play with them, we’re not the same person at a job interview as when going out at night to party. we choose to show different things, and we evolve over time.

data: fixes things. States need fixed things.

not too long ago, the amount of personally identifiable information (PII) was limited: when you crossed a border, when you registered a car, when you got a speeding ticket. that kind of info got stored by the state. back then, only the state was big enough to need a UID to track you.

now: it is stored by a large number of actors: shopping (loyalty cards, credit cards), entertainment (video streaming “rental”, music streaming, online game platforms), social media (making up ~60 to 75% of total traffic), smartphones (full of sensors and apps; the sensors can be used for more than you can imagine)… we leave data traces all the time and we have no control. we have no way of knowing where the data goes; it gets sold on, or is held in storage silos because people think it’s tomorrow’s oil. Companies might not even know what to do with it, but they gather it anyway. They keep it just in case.

Data doesn’t just sit there; it’s being used in new and dynamic ways, all to build a model of you that is as exact as possible: the Data Double. You, in data. When you enter any business transaction with companies, they don’t make decisions about you, but about what they can learn about you from their databases. You think you’re sitting down with your banker, talking about that loan, but really the decision that he’s going to make is based on your credit score, not on how compelling you are in presenting your ideas. The score is presented to them as a color; they’ll just see a green or red light, and won’t even be able to find out how that rating came about. You’re trying to create an interaction, and the decision has been made beforehand. Same with websites that decide how to interact with you based on the cookies in your browser.
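The “green or red light” can be sketched as a linear score with a cut-off. Everything here is hypothetical: the features, weights, base score and threshold are invented, and real credit scoring models are proprietary and more complex. The sketch only shows the structure: the person at the desk receives the colour returned by `traffic_light`, not the arithmetic behind it.

```python
# Hypothetical features and weights, invented for illustration only.
WEIGHTS = {"late_payments": -40, "years_at_address": 5, "open_credit_lines": -10}
BASE_SCORE = 600
THRESHOLD = 550  # assumed cut-off between "green" and "red"


def credit_score(record: dict) -> int:
    """Linear score computed from stored data about a person (their data double)."""
    return BASE_SCORE + sum(weight * record.get(feature, 0) for feature, weight in WEIGHTS.items())


def traffic_light(record: dict) -> str:
    """What the banker sees: only a colour, with the reasons hidden."""
    return "green" if credit_score(record) >= THRESHOLD else "red"


applicant = {"late_payments": 2, "years_at_address": 3, "open_credit_lines": 1}
print(traffic_light(applicant))  # red (the hidden score is 600 - 80 + 15 - 10 = 525)
```

Nothing said in the meeting enters `credit_score`; the decision is fixed by the record before the conversation starts.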

example dating site: answer a few questions (often this is done for you by cookies on other websites). Based on those, the dating site will decide who you are and provide recommendations on what to avoid and what to look out for. So the data double is not only a representation of yourself, but it’s also shaping your future self, because suddenly your options have been drastically reduced, and your perspective has narrowed.

states love data doubles, because it’s a lot easier to deal with data than it is to deal with people. People are complex, messy, can be annoying. Data is stable, fixed, doesn’t yell back at you. High temptation to substitute people with data (“The data gives me a good idea of what the people I represent in parliament want”)

Increasing pressure to conform to the image that the data has about me. Example credit card fraud detection: do something unusual and it’ll flag it as probably fraudulent and won’t allow it.
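A very simple version of this kind of flagging compares a new payment with the card holder’s own history. The sketch below uses a z-score rule (how many standard deviations a payment lies from your usual spending) with an assumed cut-off of three; this is a textbook anomaly-detection technique chosen for illustration, not how any particular card issuer works, and `is_flagged` is a hypothetical name.

```python
from statistics import mean, stdev


def is_flagged(history: list[float], amount: float, cutoff: float = 3.0) -> bool:
    """Flag a payment that deviates strongly from the card holder's past spending.

    Uses a z-score: how many standard deviations the new amount lies from the mean.
    """
    if len(history) < 2:
        return False  # too little data to model "normal" behaviour yet
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return amount != mu  # any change from perfectly uniform spending is unusual
    return abs(amount - mu) / sigma > cutoff


past_payments = [20.0, 35.0, 25.0, 30.0, 40.0]  # hypothetical card history
print(is_flagged(past_payments, 45.0))   # False: within the usual range
print(is_flagged(past_payments, 900.0))  # True: far outside it
```

The rule has no notion of why you are spending differently: anything far enough from your own past pattern is treated as suspect, which is exactly the pressure to conform described above.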

Ends with: can I ever get out of this cage again? Does the data double forget? Forgive? (doesn’t look like it). So: until we have the legal tools to deal with this directly and fairly, the solution is sabotage. Only give up data if it profits you.

Bottom line: most of it is being used for advertising and/or prediction. To read more about the details, see e.g. Epic.org: Privacy and Consumer Profiling.

Come to our Cryptoparty on May 19 to learn about self-defense measures.